SIMPLY BETTER SECURITY

The original cloud-based access control solution, trusted by millions. Protect your building, employees, visitors, customers, residents, and data with Brivo.

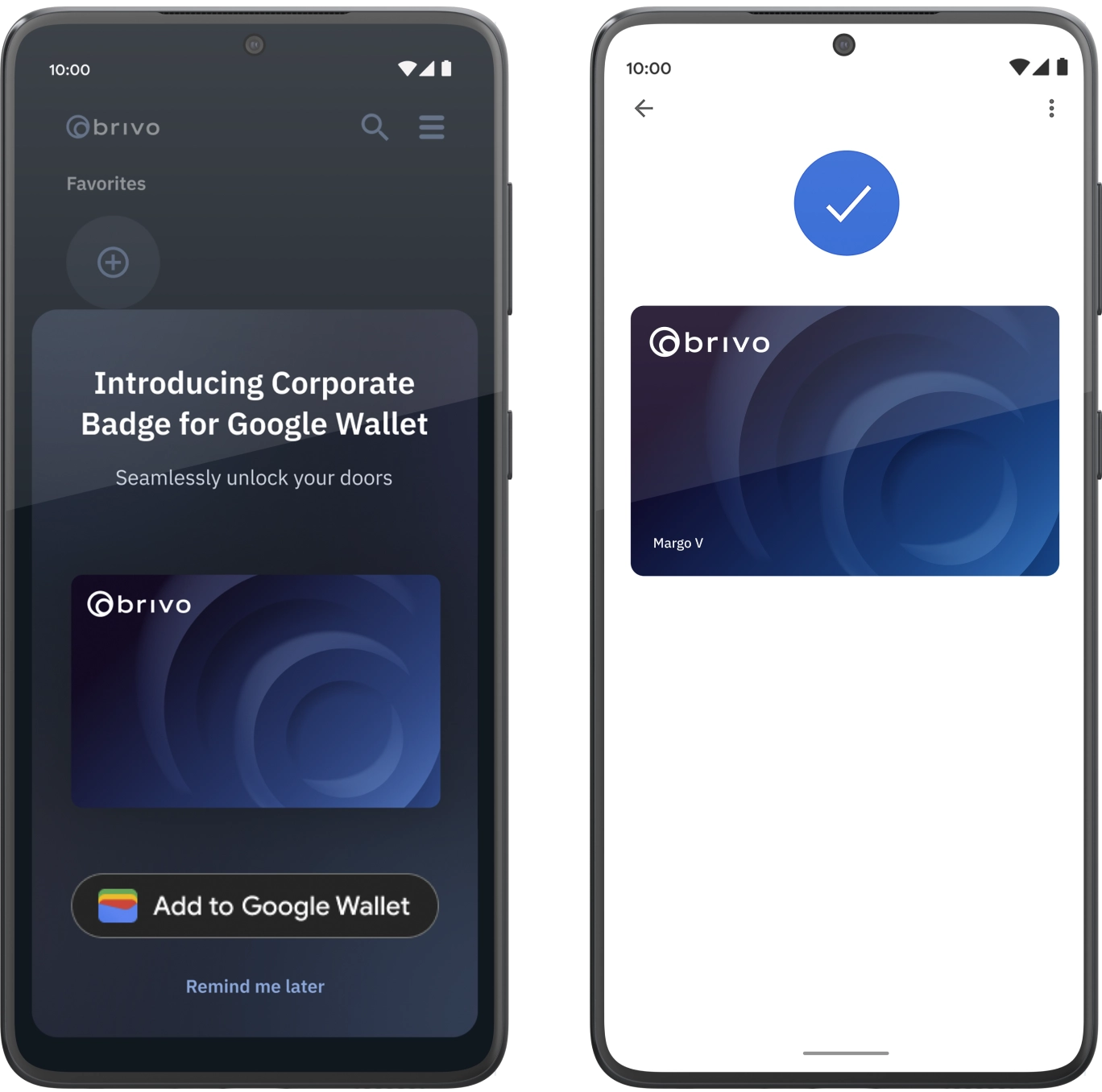

More Convenience, Powered by Brivo Wallet Pass

Elevate your access experience with an even easier way to get around the office using highly secure mobile credentials.

Employee Badge in Apple Wallet

Now on iPhone and Apple Watch

Access Credentials for Android

Using Google Wallet

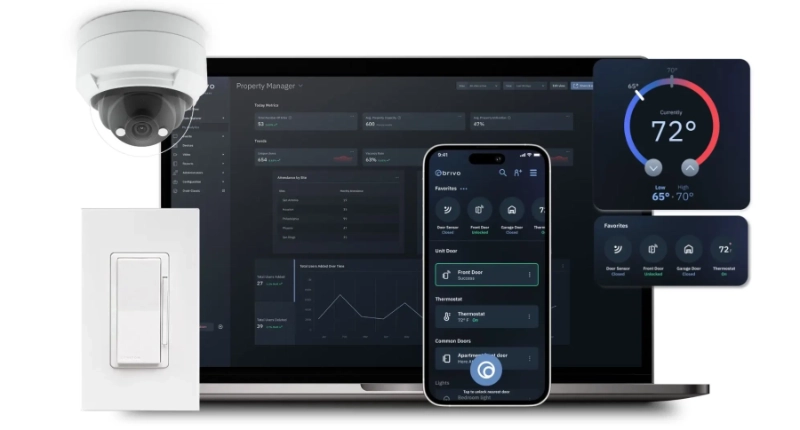

Brivo is the Foundational Platform for Smart Spaces

Explore how Brivo’s cloud-based access control solutions and capabilities create the perfect physical security ecosystem and a solid foundation for your smart space.


Experience Unparalleled Enterprise Security Transformation with Brivo Door Station

Your all-in-one solution to optimize the door experience

- Video of every access event

- Convenient access with facial authentication

- Sleek, modern design

Cloud-Based Access Control Solutions for Every Industry

Enterprise

Use our comprehensive enterprise-grade access control solution to implement an effective and scalable security system. Manage all your assets in one cybersecure place. Connect physical access control with identity and access management to expand data collection and gain better insights into your physical space.

Multifamily

Create a modern, smart multifamily community with a security platform designed to give residents the amenities they want. Increase property management efficiency with tools like self-guided tours and mobile management. Smart devices such as thermostats and sensors reduce costs and increase asset ROI.

Commercial Real Estate (CRE)

Discover a cloud-based access control system for the smart office and multi-use building. Track occupancy to protect the health and safety of residents, customers, and visitors. Use mobile credentials to create a frictionless flow for occupants from the parking garage to the office suite.

Vacation Rental

Revolutionize your vacation rentals with Brivo, the complete solution that seamlessly blends smart automation, streamlined property management, and exceptional guest experiences, all delivered by a single, trusted provider.

Brivo is Shaping the Future

Millions of Users Worldwide Trust Brivo

The original cloud-based access control solution

Our Extensive Open API Integrates with Numerous Platforms

Create a seamless customer experience and a holistic physical security ecosystem. Connect right away to a range of solutions, including identity management, intercoms, biometrics, and more.
